Are LLMs Capable Of Spatial Reasoning? A Small Experiment

LLMs have made significant advances in recent months. They have developed impressive reasoning capabilities, which make them good at agentic tasks.

This raises an interesting question: Are LLMs capable of spatial reasoning?

More specifically, can they navigate an environment using only indirect feedback? Do different models behave similarly?

To explore this, I modified the classic hotter-colder game to see how well LLMs perform.

Setup

I created a grid environment of size 5x5. The start position and target position are randomized per round. The agent can move one step at a time, along either the x-axis or the y-axis, in the positive or negative direction. It does so by calling a tool with one of 4 input strings: “x+”, “x-”, “y+” and “y-”.

The agent needs to make the first move. Based on it, one of the following types of feedback is given: “Hotter”, “Colder”, “Neutral” or “Illegal”.

Hotter means that you are getting closer to the target position. Colder means that you are moving away from the target position. Neutral means that there is no change in the distance.

Illegal is a special feedback that indicates one of two things:

- An undo move was attempted. For example, if the previous valid move was “x+”, the next valid move cannot be “x-”.

- An out-of-bounds move was attempted.

The distance between the points is calculated using Manhattan distance.
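The feedback rules above can be sketched in a few lines of Python. This is a minimal illustration, not the code from the repository: the names `step`, `MOVES` and `REVERSE` are hypothetical, and I am assuming that coordinates run from 0 to 4 and that an illegal move leaves the agent where it was.

```python
from typing import Optional, Tuple

Position = Tuple[int, int]

# The four tool inputs, mapped to (dx, dy) steps.
MOVES = {"x+": (1, 0), "x-": (-1, 0), "y+": (0, 1), "y-": (0, -1)}
# Each move's reverse, used to detect undo attempts.
REVERSE = {"x+": "x-", "x-": "x+", "y+": "y-", "y-": "y+"}


def manhattan(a: Position, b: Position) -> int:
    """Manhattan distance between two grid cells."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def step(pos: Position, target: Position, move: str,
         last_valid_move: Optional[str], size: int = 5) -> Tuple[Position, str]:
    """Return (new_position, feedback) for one attempted move."""
    # Undo attempt: the reverse of the previous valid move is rejected.
    if last_valid_move is not None and move == REVERSE[last_valid_move]:
        return pos, "Illegal"
    dx, dy = MOVES[move]
    new_pos = (pos[0] + dx, pos[1] + dy)
    # Out-of-bounds attempt (assumed to leave the position unchanged).
    if not (0 <= new_pos[0] < size and 0 <= new_pos[1] < size):
        return pos, "Illegal"
    before, after = manhattan(pos, target), manhattan(new_pos, target)
    if after < before:
        return new_pos, "Hotter"
    if after > before:
        return new_pos, "Colder"
    return new_pos, "Neutral"
```

For example, `step((0, 0), (4, 4), "x+", None)` moves the agent to `(1, 0)` with “Hotter” feedback, while a following “x-” would be rejected as an undo.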

You can read the system prompt here.

What This Test Actually Measures

This test follows these key constraints:

- No undo moves allowed

- The grid size is unknown to the model

- Strict tool calling format required

- No direct coordinates

- Only relative feedback

It evaluates a combination of abilities:

- Feedback driven search

Models must interpret “hotter/colder” signals and adjust direction accordingly. Since the coordinates of start position and target position are randomly generated, there is a possibility that both might be the same. This is a curveball to test whether LLMs can figure it out.

- Sequential decision making

Unlike the typical hotter-colder game, I have explicitly added a rule that LLMs cannot reverse their previous move. Otherwise, the game could be solved with a simple strategy: keep going in one direction until you receive Colder or Illegal feedback. When you do, reverse your move and go in another direction.

With this rule in place, the models are forced to use a strategy where they must commit to moves and plan ahead.

- State Tracking

If you read the system prompt, you will realise that I have not informed the agent/LLM about the size of the grid. So they need to figure out whether they hit a wall or attempted an undo move after receiving illegal feedback, by tracking their previous moves. This adds a layer of complexity.

- Tool use reliability

I am using a custom XML format to see how well models can follow it over multiple turns, since they need to act as an agent. If the model produces a tool call in an incorrect format, I stop the round right away and count it as a failure.
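The state tracking point above comes down to a small piece of bookkeeping. The sketch below is my own illustration of it (the function name `explain_illegal` is hypothetical and does not come from the repository): an Illegal move that reverses the previous valid move must be an undo, because stepping back always lands on a cell that is inside the grid. Any other Illegal move must have hit a wall.

```python
from typing import Optional

# Each move's reverse, as defined by the undo rule.
REVERSE = {"x+": "x-", "x-": "x+", "y+": "y-", "y-": "y+"}


def explain_illegal(attempted: str, last_valid_move: Optional[str]) -> str:
    """Classify an Illegal response as an undo attempt or a wall hit."""
    if last_valid_move is not None and attempted == REVERSE[last_valid_move]:
        # Stepping back to the previous cell is always in bounds,
        # so the only possible cause is the undo rule.
        return "undo"
    # Otherwise the move would have left the grid in that direction.
    return "wall"
```

So after a valid “x+”, an Illegal “x-” tells the agent nothing about the grid, while an Illegal “y+” reveals that it is standing on the upper y boundary.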

Models Selected

For this test, I am using the models available on openrouter.ai. Due to budget constraints, I ran this test 20 times per model. I am not setting any custom sampling parameters, such as temperature or top p, for any of the models.

The following is the list of models that I have tested:

- Jamba-Large-1.7 by ai21

- Amazon Nova 2 Lite and Amazon Nova Premier v1 by Amazon

- Claude Opus 4.6 by Anthropic

- Ernie 4.5 21B A3B Thinking by Baidu

- Seed 2.0 lite by Bytedance

- Cogito v2.1 671B by DeepCogito

- DeepSeek v3.2 Speciale by DeepSeek

- Gemini 3.1 pro preview customtools by Google

- Mercury 2 by Inception

- Kat Coder Pro by Kwaipilot

- Minimax M2.5 and Minimax M2.7 by Minimax

- Devstral 2512, Mistral Large 2512 and Mistral Small 2603 by Mistral

- Kimi K2.5 by Moonshot

- Hermes 4 70B and Hermes 4 405B by NousResearch

- Nemotron 3 nano 30B A3B by Nvidia

- GPT 5.4 and GPT OSS 120B by OpenAI

- Xiaomi Mimo v2 pro and Xiaomi Mimo v2 Flash by Xiaomi

- Intellect-3 by Prime Intellect

- Qwen 3.5 35B A3B by Qwen

- Step 3.5 Flash by Stepfun

- Grok 4.20 beta by X-ai

- GLM 5 by Z-ai

Baseline

In this setup, I am using a 5x5 grid. Ideally, an LLM should be able to reach any target position from any starting position in 25 moves. However, since I am not sharing the grid size, LLMs will likely make out-of-bounds moves. After at most 4 such illegal moves, an LLM should be able to infer the size of the grid. So, including illegal moves, an LLM should reach the target position in a total of 29 moves. I have set the max moves to 30.

The longest Manhattan distance in a 5x5 grid is 8, between (0,0) and (4,4). With optimal play, an LLM should reach the target location in 8 moves. Since an LLM would need 4 illegal moves to figure out the boundary, it should be able to reach any target location in a maximum of 12 moves with efficient play.

So, if an LLM has not reached the target after 30 moves, it has failed. If an LLM reaches the target within 12 moves, it has played efficiently.
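The budgets above are simple arithmetic, and a brute-force check makes them easy to verify for other grid sizes. This is a small sketch with hypothetical names (`max_manhattan`, `WALL_PROBES`), not part of the original experiment code.

```python
from itertools import product


def max_manhattan(width: int, height: int) -> int:
    """Largest Manhattan distance between any two cells of the grid."""
    cells = list(product(range(width), range(height)))
    return max(abs(ax - bx) + abs(ay - by)
               for (ax, ay), (bx, by) in product(cells, cells))


SIZE = 5
WALL_PROBES = 4  # illegal moves budgeted for finding the boundary

longest = max_manhattan(SIZE, SIZE)          # 8, e.g. (0,0) to (4,4)
efficient_budget = longest + WALL_PROBES     # 12 moves with efficient play
coverage_budget = SIZE * SIZE + WALL_PROBES  # 29 moves, just under the cap of 30
```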

I have also added 2 baseline approaches to compare against LLM: Random walk and “Smart” Solver. Random walk chooses any one of the 4 possible moves at a time and tries to reach the target position. “Smart” Solver is a sub-optimal solver which uses the last 2 moves and their feedback to determine how it should proceed.
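To give an idea of what the random walk baseline looks like, here is a minimal sketch. It is not the implementation from the repository. I am assuming that the walker picks uniformly among the 4 moves, that illegal moves count towards the move total without changing the position, and that a round is capped at 30 moves. The function name `random_walk_round` is hypothetical.

```python
import random
from typing import Optional, Tuple

Position = Tuple[int, int]

MOVES = {"x+": (1, 0), "x-": (-1, 0), "y+": (0, 1), "y-": (0, -1)}
REVERSE = {"x+": "x-", "x-": "x+", "y+": "y-", "y-": "y+"}


def random_walk_round(start: Position, target: Position, size: int = 5,
                      max_moves: int = 30,
                      rng: Optional[random.Random] = None) -> Optional[int]:
    """Return the number of moves used, or None if the cap is reached first."""
    rng = rng or random.Random()
    pos, last_valid = start, None
    for moves_used in range(1, max_moves + 1):
        move = rng.choice(list(MOVES))
        # Undo attempt: counted as a move, position unchanged.
        if last_valid is not None and move == REVERSE[last_valid]:
            continue
        dx, dy = MOVES[move]
        nxt = (pos[0] + dx, pos[1] + dy)
        # Out-of-bounds attempt: counted as a move, position unchanged.
        if not (0 <= nxt[0] < size and 0 <= nxt[1] < size):
            continue
        pos, last_valid = nxt, move
        if pos == target:
            return moves_used
    return None
```

Note that the target check happens only after a valid move, so a round where the start equals the target still requires the walker to leave and come back.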

Metrics

There are 2 things that LLMs should not fail at:

- LLMs should be able to follow the specified format in a multi-turn conversation with no errors.

- LLMs should be able to reach the target position within 30 moves.

InvalidTool Rate: Percentage of rounds with an LLM returning an invalid format for a tool call.

Failure Rate: Percentage of rounds with an LLM reaching Max Moves without reaching the target position.

Efficiency: If an LLM was able to reach the target position, how efficient was it?

Efficiency is calculated as the average of (Total moves - Manhattan distance) over successful rounds. By this metric, a model should have a value lower than 4 if it is playing efficiently, since 4 is the number of illegal moves budgeted in the baseline for finding the walls. A value in that range means that the model is not exploring blindly. It is forming directional hypotheses and refining them, which indicates spatial reasoning.
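The three metrics can be computed from per-round records along these lines. This is a sketch under assumptions: the `Round` record and its `outcome` labels are hypothetical, and I am assuming that total moves include illegal moves, as in the baseline calculation.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Round:
    outcome: str             # "success", "max_moves" or "invalid_tool"
    total_moves: int         # moves made in the round, illegal ones included
    manhattan_distance: int  # distance between start and target


def summarize(rounds: List[Round]) -> Dict[str, Optional[float]]:
    """Compute InvalidTool Rate, Failure Rate and Efficiency for one model."""
    n = len(rounds)
    invalid = sum(r.outcome == "invalid_tool" for r in rounds)
    failed = sum(r.outcome == "max_moves" for r in rounds)
    wins = [r for r in rounds if r.outcome == "success"]
    # Efficiency: average number of extra moves over successful rounds only.
    efficiency = (
        sum(r.total_moves - r.manhattan_distance for r in wins) / len(wins)
        if wins else None
    )
    return {
        "invalid_tool_rate": 100 * invalid / n,
        "failure_rate": 100 * failed / n,
        "efficiency": efficiency,
    }
```

For instance, two successful rounds that took 10 moves for a distance of 6 and 12 moves for a distance of 8 give an efficiency of 4.0.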

In order to be successful, LLMs need to use an efficient strategy, keep track of their previous moves and the feedback they receive. They also need to be consistent at tool calling.

Results

Random Solver: Efficiency: 17.7

“Smart” Solver: Efficiency: 11.9

| Model Name | InvalidTool Rate % | Failure Rate (Max moves reached) % | Efficiency (if target position was reached) |

|---|---|---|---|

| gemini-3.1-pro-preview-customtools | 0 | 0 | 3 |

| deepseek-v3.2-speciale | 0 | 0 | 3.6 |

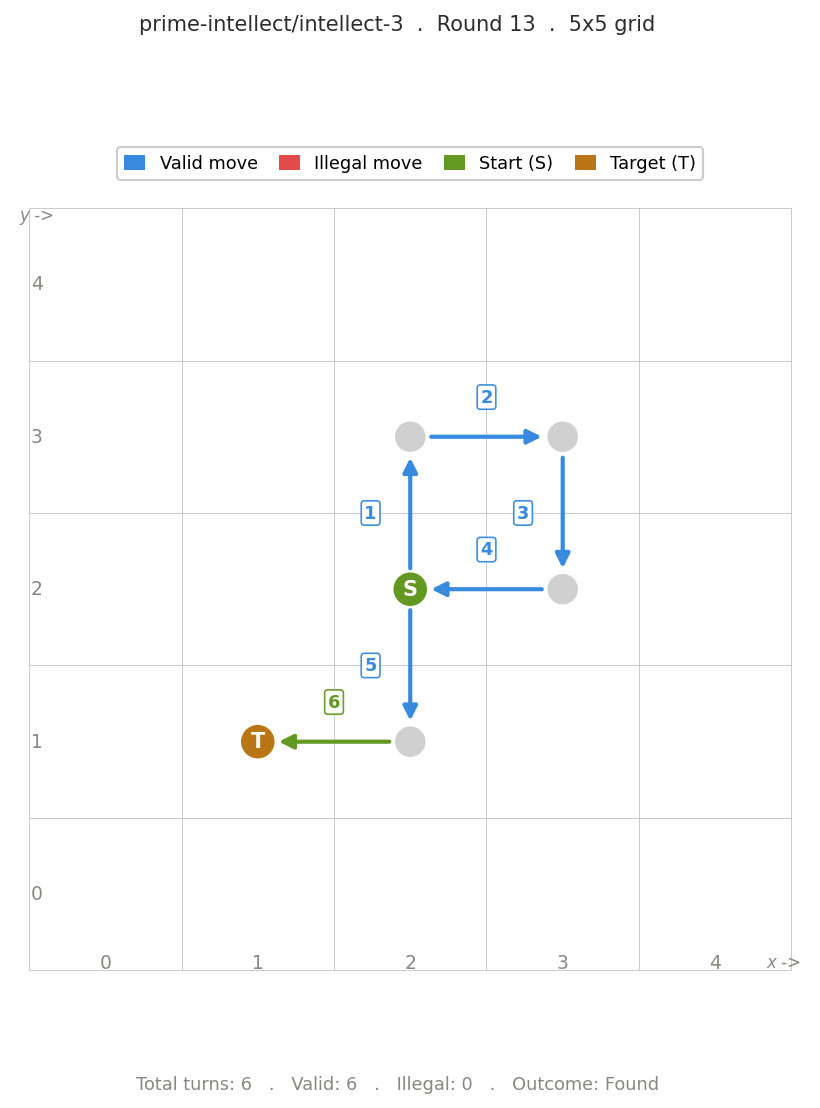

| intellect-3 | 0 | 0 | 4.15 |

| mimo-v2-pro | 0 | 0 | 4.6 |

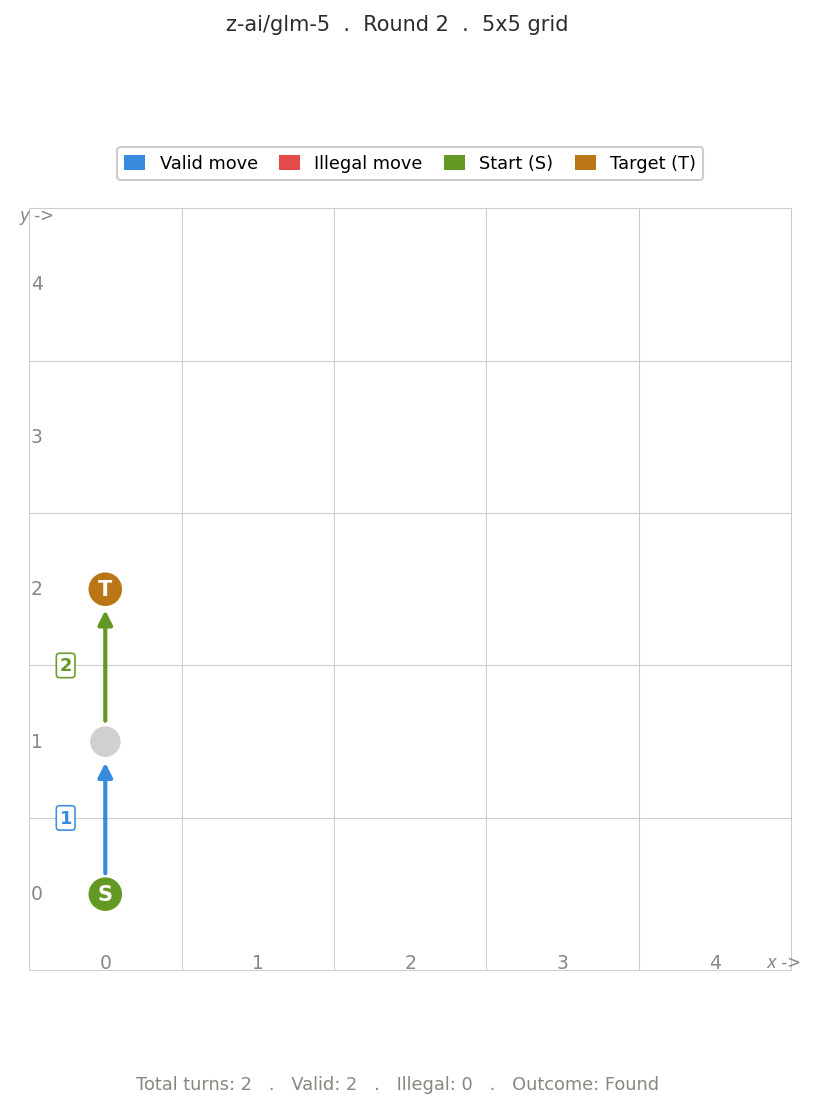

| glm-5 | 0 | 0 | 4.65 |

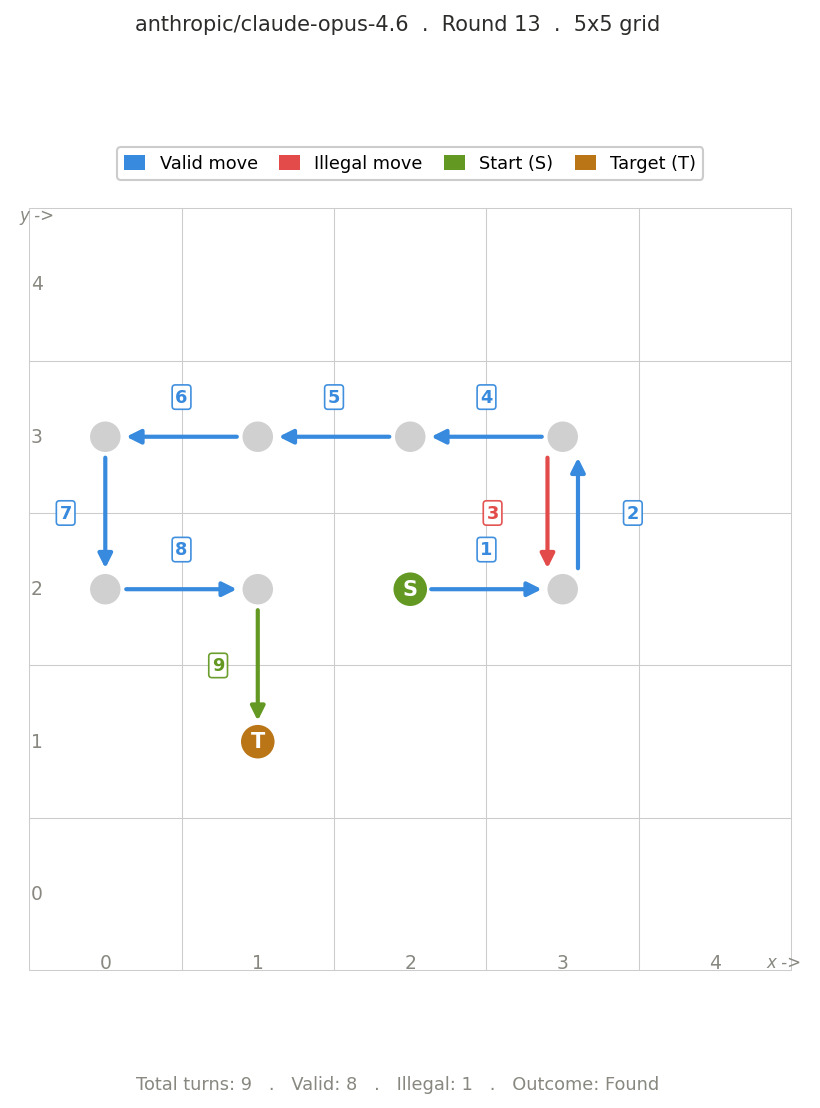

| claude-opus-4.6 | 0 | 0 | 4.95 |

| qwen3.5-35b-a3b | 0 | 5 | 5.79 |

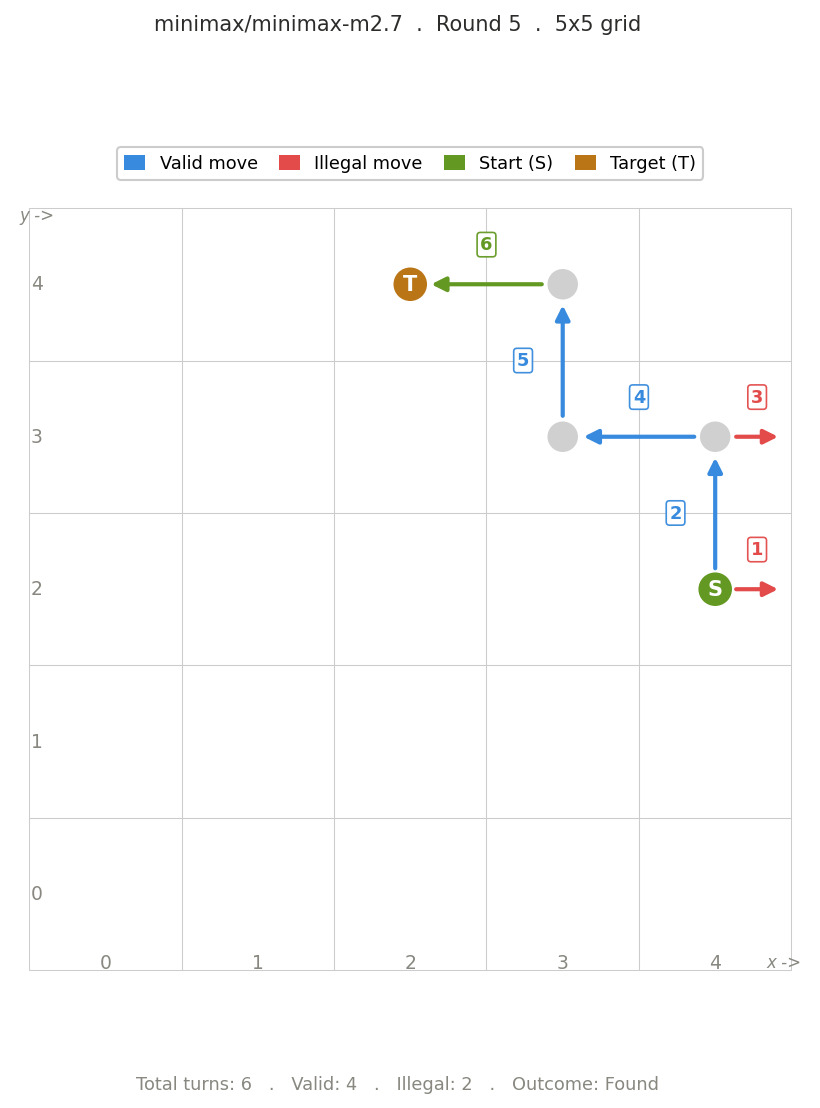

| minimax-m2.7 | 0 | 15 | 7.24 |

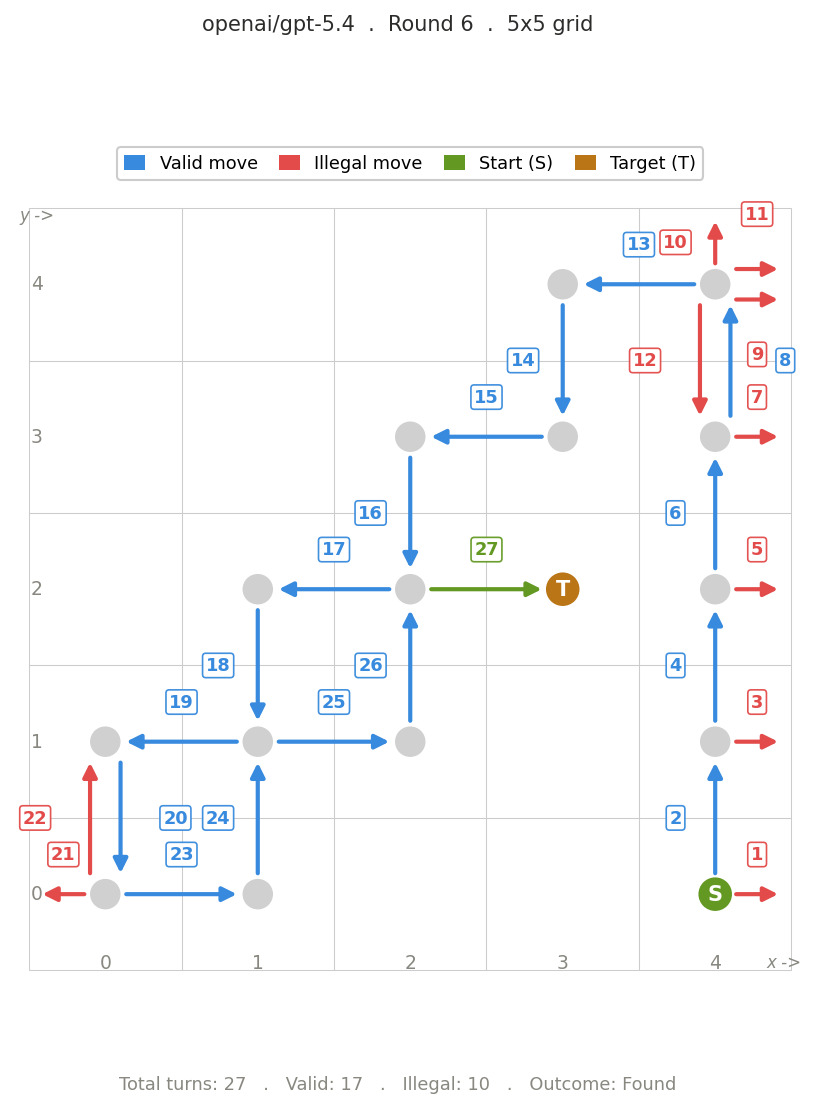

| gpt-5.4 | 0 | 20 | 11.12 |

| minimax-m2.5 | 0 | 25 | 7.4 |

| mistral-large-2512 | 0 | 25 | 8.53 |

| kat-coder-pro | 0 | 35 | 8.54 |

| grok-4.20-beta | 0 | 35 | 11.31 |

| seed-2.0-lite | 0 | 40 | 6 |

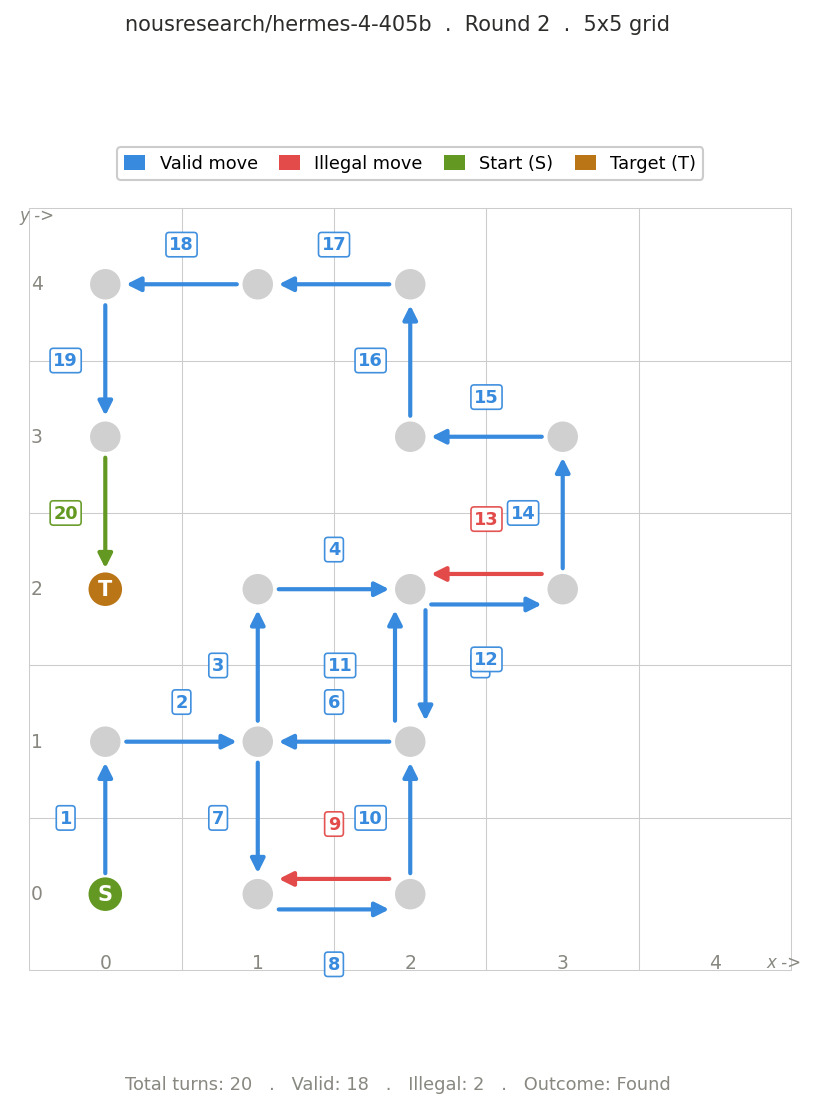

| hermes-4-405b | 0 | 40 | 11.67 |

| nova-premier-v1 | 0 | 50 | 5.5 |

| hermes-4-70b | 0 | 50 | 6.6 |

| devstral-2512 | 0 | 50 | 9.1 |

| kimi-k2.5 | 5 | 0 | 4.84 |

| mimo-v2-flash | 5 | 35 | 7.75 |

| cogito-v2.1-671b | 5 | 45 | 6.7 |

| mistral-small-2603 | 10 | 35 | 10.18 |

| ernie-4.5-21b-a3b-thinking | 10 | 55 | 7.43 |

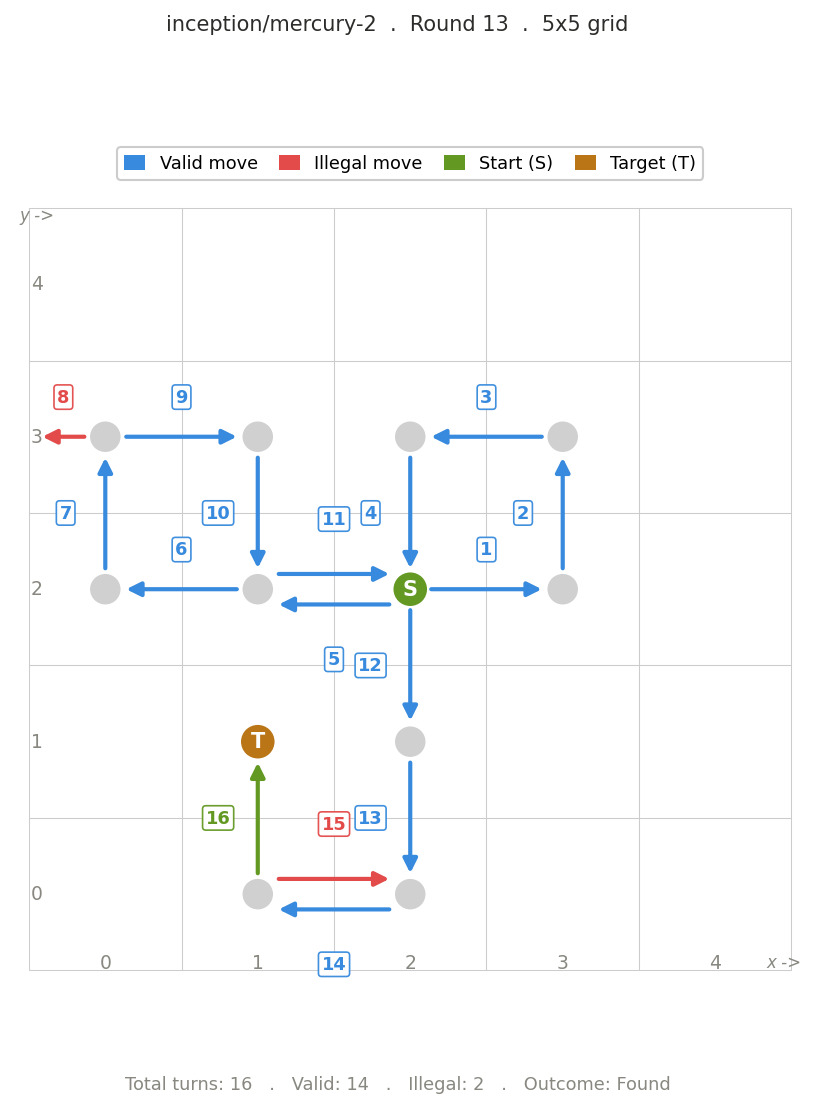

| mercury-2 | 15 | 0 | 4.35 |

| step-3.5-flash | 20 | 0 | 2.88 |

| jamba-large-1.7 | 30 | 35 | 11.43 |

| gpt-oss-120b | 35 | 0 | 5.54 |

| nova-2-lite-v1 | 40 | 35 | 3.8 |

| nemotron-3-nano-30b-a3b | 60 | 5 | 4.14 |

Analysis

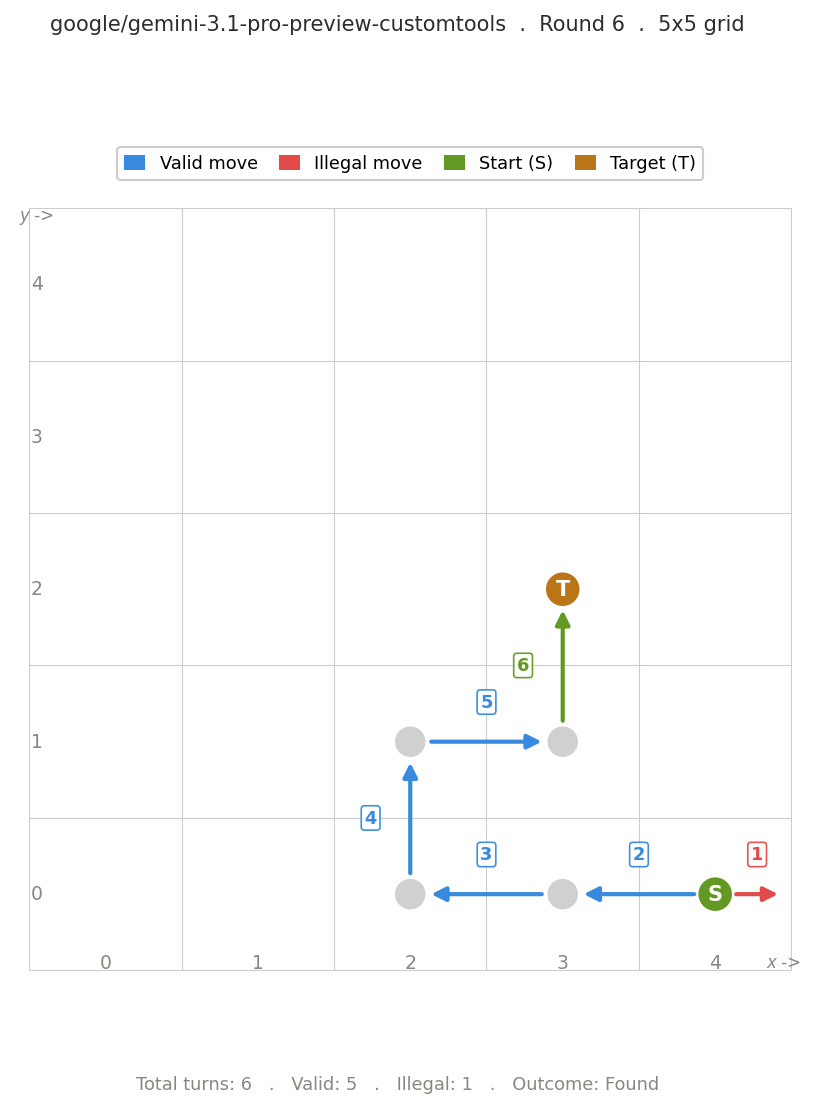

Some models follow effective strategies. The best performing model was Gemini 3.1 pro preview customtools. It had no invalid tool calls or failures, with an efficiency of 3.

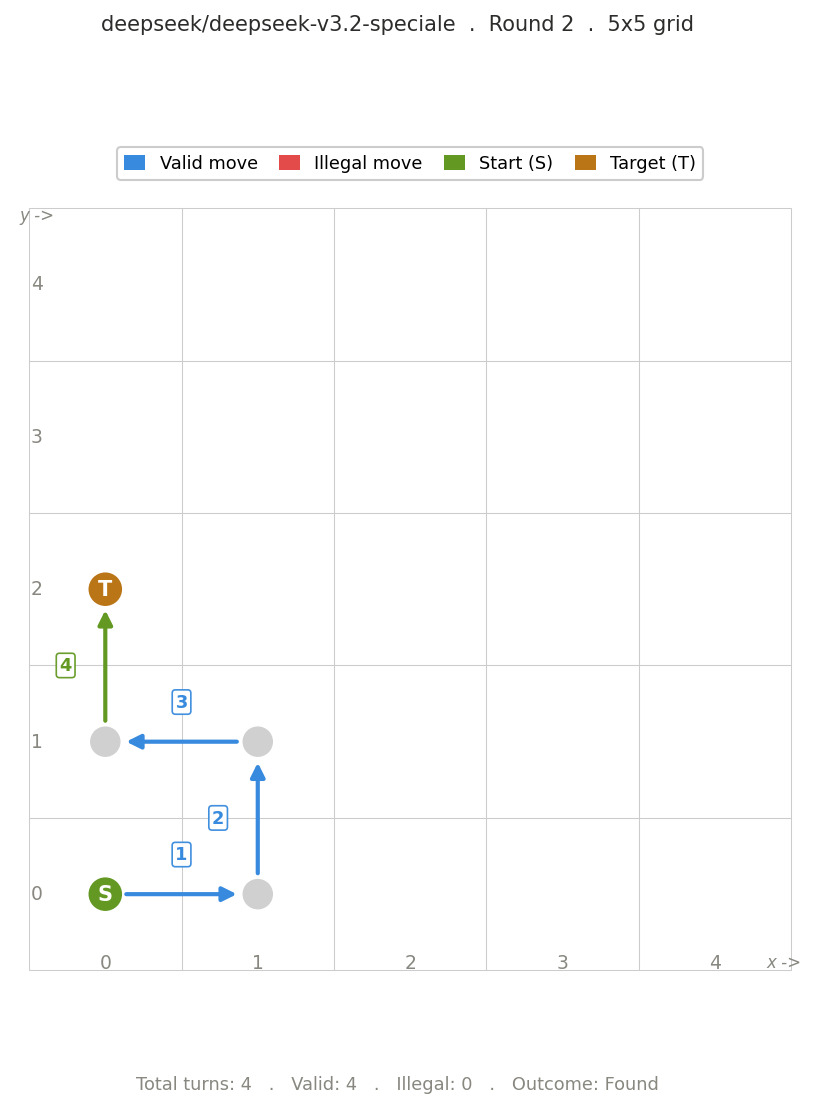

The open source model DeepSeek V3.2 Speciale isn’t far behind. It also had no invalid tool calls or failures, with an efficiency of 3.6.

Other models such as Intellect 3, Mimo v2 pro, GLM 5 and Claude Opus 4.6 performed slightly worse than the top models, with efficiencies between 4.15 and 4.95.

It is quite interesting to see that the open source model Intellect 3, released in Nov 2025, was able to perform better than models that were released later.

There are other models such as gpt-oss-120b, step-3.5-flash, mercury-2 and kimi-k2.5 which would have placed higher if they had been able to follow the custom tool call format.

It is quite clear that there is a real capability gap among models. Some models succeed almost every time. Others fail to follow the required format, which is a major bottleneck.

Additionally, if you look at the reasoning traces of a few LLMs (traces for the round where the start position is the same as the target position), we can see the following:

- Models are able to consistently track the state.

- They are able to infer the environment from the feedback.

- They are able to form and update hypotheses regarding directions.

- They are able to operate under constraints over multiple steps.

The stronger models are able to make adaptive and stateful decisions.

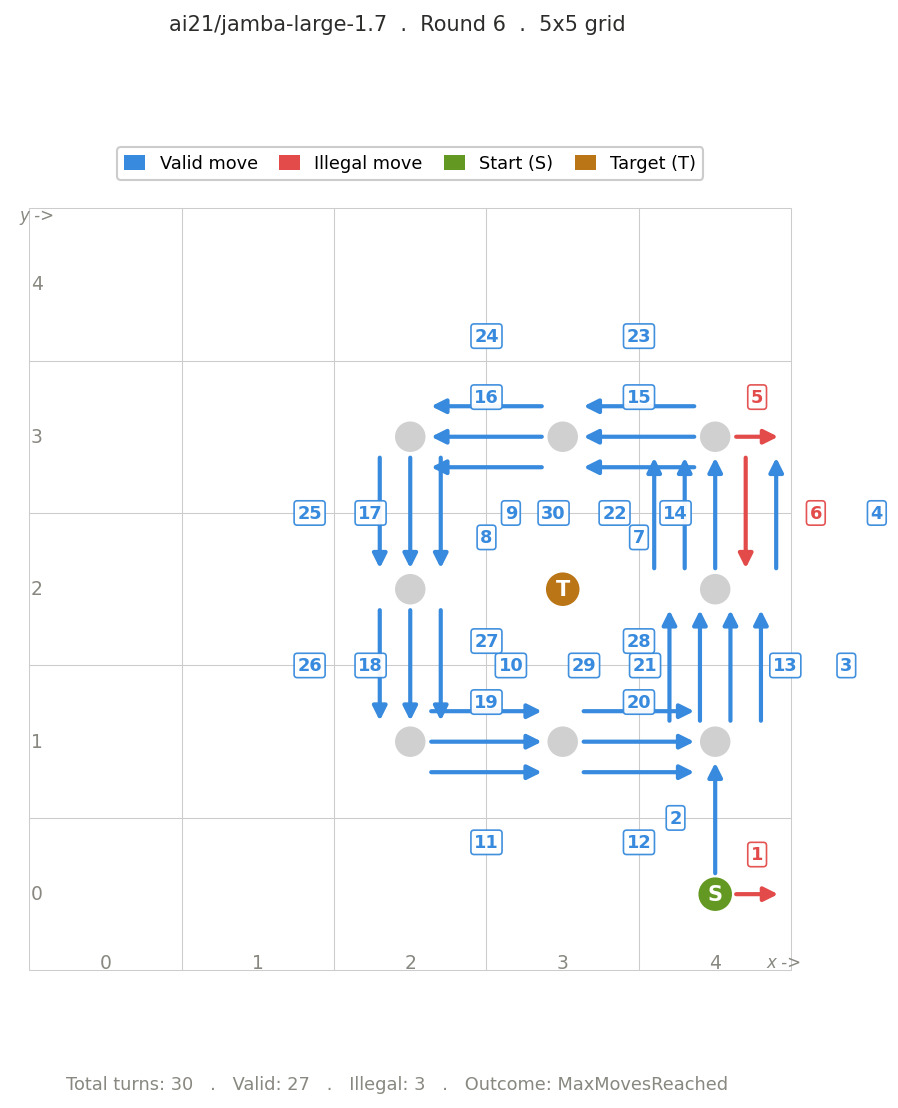

LLMs fail due to one or a combination of the following reasons:

- Attempting undo moves.

- Getting stuck in a corner and unable to figure out how to get out of it.

- Getting stuck in a loop and unable to figure out how to break it.

- Failing to keep track of the moves and making incorrect choices.

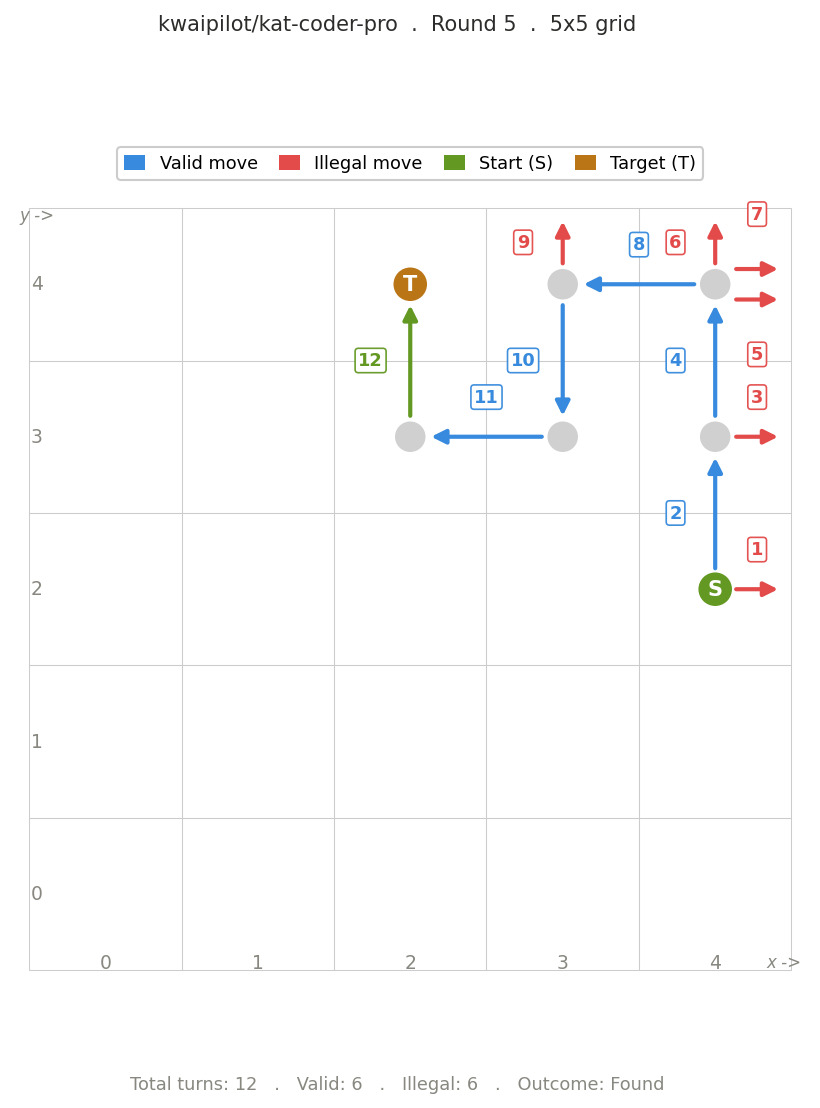

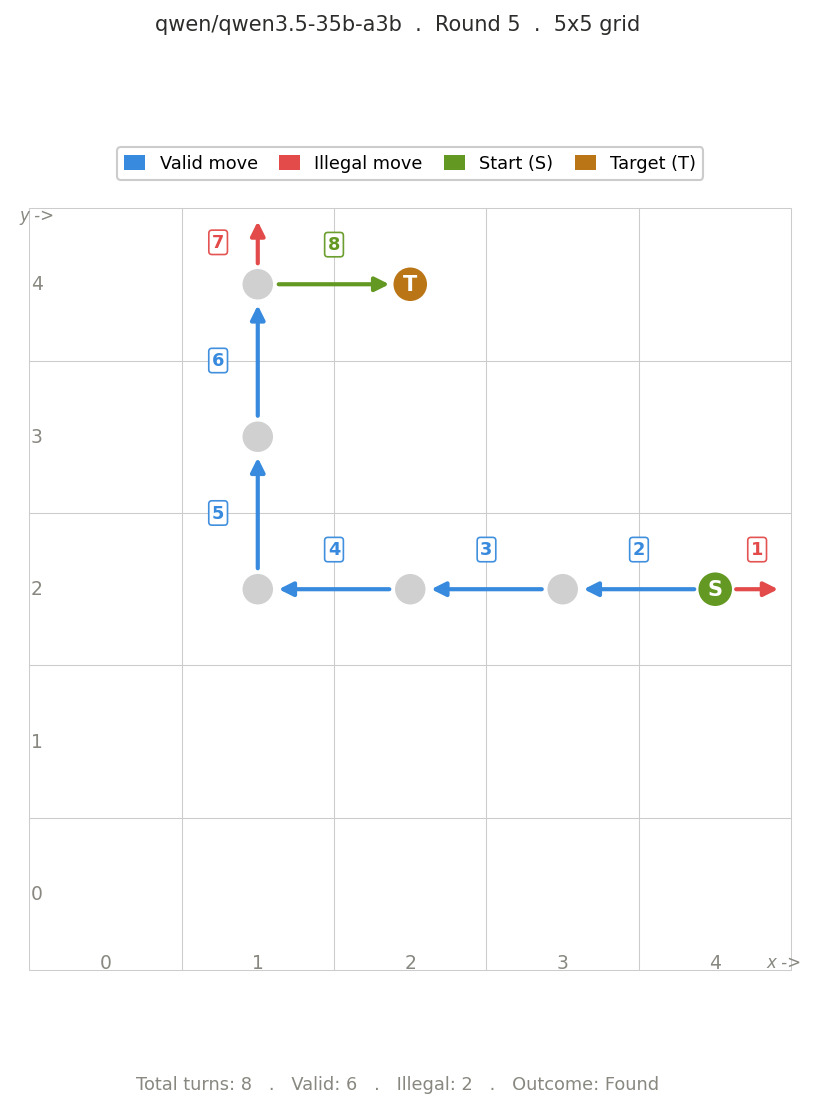

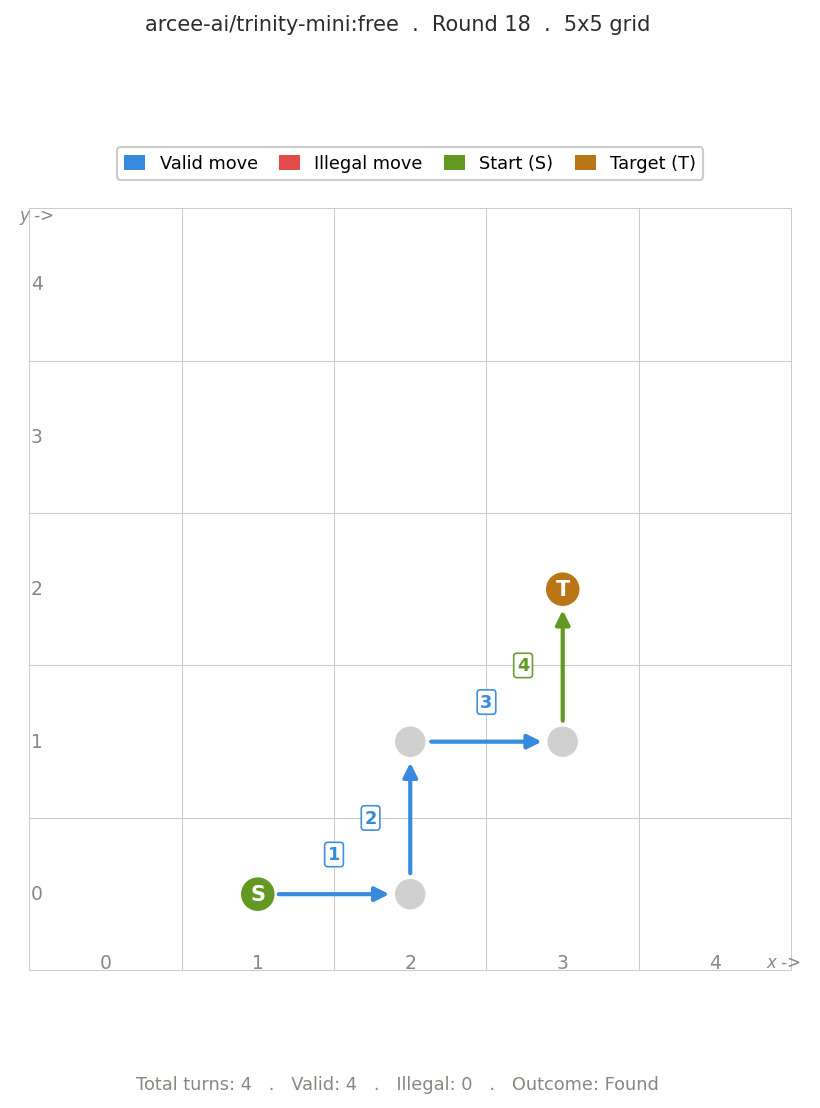

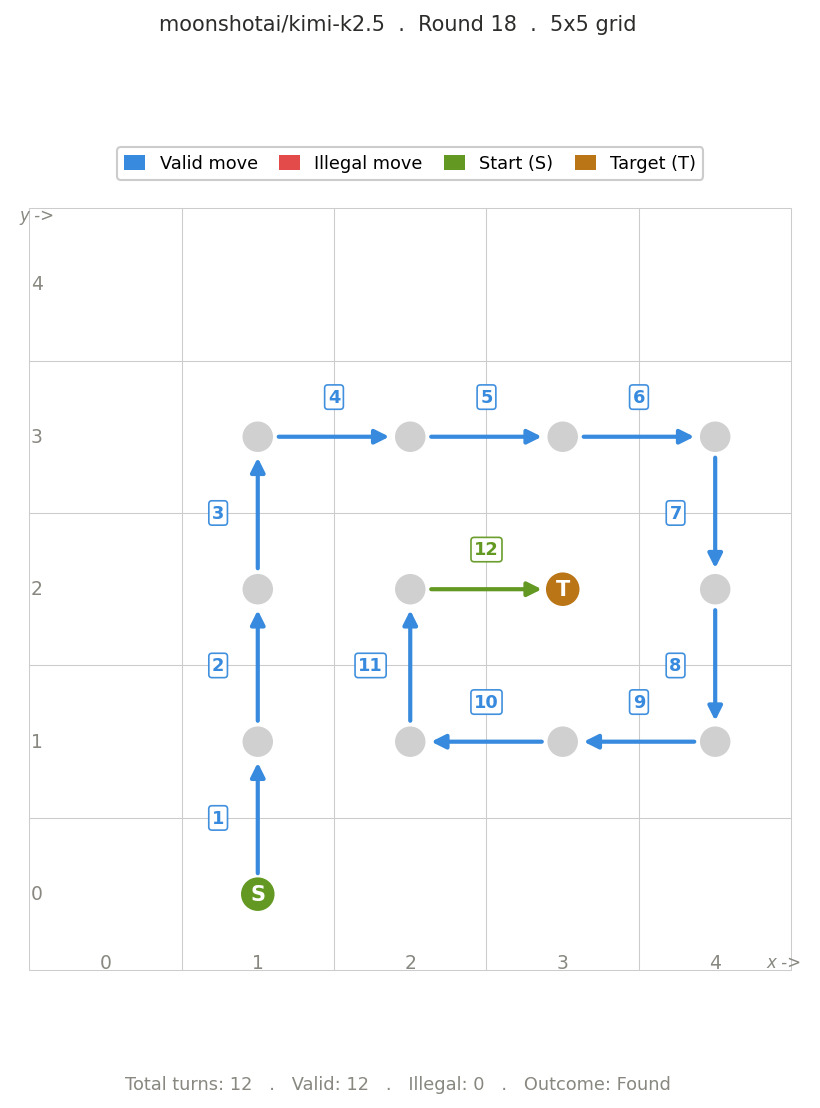

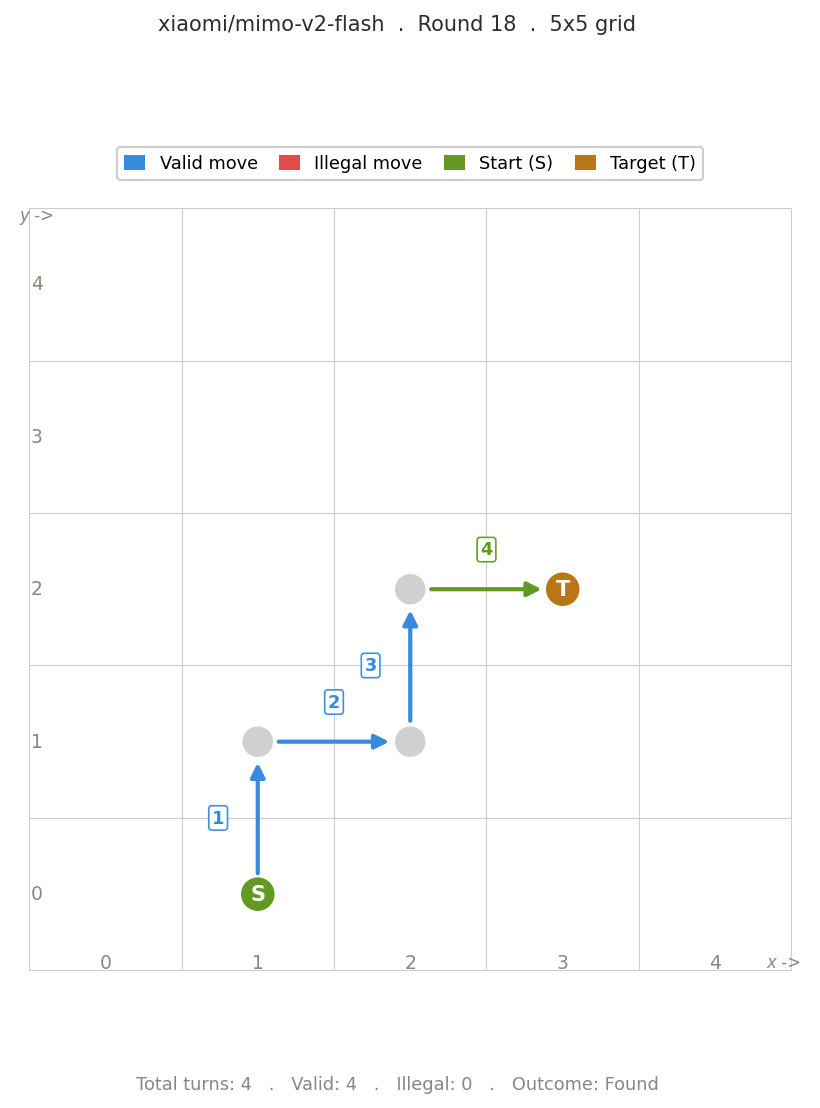

Visualizing the path of LLMs

It is not feasible to add images of all the paths taken by all the LLMs. I am sharing a few of the paths that were taken by LLMs.

Based on these trajectories, it is clear that efficient models tend to move in straight lines along one axis after exploring the directions. This suggests that they break the problem down into simpler steps. In contrast, weaker models move more erratically, which indicates poor tracking of previous moves and less consistent decision-making.

Round 2

Round 5

Round 6

Round 13

Round 18

Final Thoughts

This test had n=20, which is small but enough to indicate that LLMs may possess spatial reasoning. To be thorough, one would have to run this more extensively on all possible combinations of start and target positions with varying grid sizes such as 5x5, 10x10, 20x20, etc. One could also use non-square sizes such as 5x10 to push the models further.

Due to budget constraints, I won’t be doing that. It was a simple experiment where I created a custom environment and tested if LLMs can navigate it based only on the feedback. You can check out the code: https://github.com/nhegde610/Hotter_Colder_Game

This indicates that in a constrained, feedback-driven environment, some models are able to navigate the environment efficiently by exhibiting consistent spatial reasoning behaviour.